I recently read Weapons of Math Destruction by Dr. Cathy O’Neil and found it an enormously frustrating book. It’s not that the whole book was rubbish – that would have made things easy. No, the real problem with this book is that the crap and the pearls were so closely mixed that I had to stare at every sentence very, very carefully in hopes of figuring out which one each was. There’s some good stuff in here. But much of Dr. O’Neil’s argumentation relies on two new (to me) fallacies. It’s these fallacies (which I’ve dubbed the Ought-Is Fallacy and the Availability Bait-and-Switch) that I want to explore today.

Ought-Is Fallacy

It’s a commonly repeated truism that “correlation doesn’t imply causation”. People who’ve been around the statistics block a bit longer might echo Randall Munroe and retort that “correlation doesn’t imply causation, but it does waggle its eyebrows suggestively and gesture furtively while mouthing ‘look over there’”. Understanding why a graph like this:

is utter horsecrap[1], despite how suggestive it looks, is the work of a decent education in statistics. Here correlation doesn’t imply causation. On the other hand, it’s not hard to find excellent examples where correlation really does mean causation:

When trying to understand the ground truth, it’s important that you don’t confuse correlation with causation. But not every human endeavour is aimed at determining the ground truth. Some endeavours really do just need to understand which activities and results are correlated. Principal among these is insurance.

Let’s say I wanted to sell you “punched in the face” insurance. You’d pay a small premium every month and if you were ever punched in the face hard enough to require dental work, I’d pay you enough to cover it[2]. I’d probably charge you more if you were male, because men are much, much more likely to be seriously injured in an assault than women are.

I’m just interested in pricing my product. It doesn’t actually matter if being a man is causal of more assaults or just correlated with it. It doesn’t matter if men aren’t inherently more likely to assault and be assaulted compared to women (for a biological definition of “inherently”). It doesn’t matter what assault rates would be like in a society without toxic masculinity. One thing and one thing alone matters: on average, I will have to pay out more often for men. Therefore, I charge men more.
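To make the pricing logic concrete, here is a minimal sketch of a break-even premium calculation. The claim probabilities and payout are hypothetical numbers invented for illustration (they are not real assault statistics), and `break_even_premium` is a made-up helper, not an actuarial standard. The point is only that the calculation needs the observed claim rate per group and nothing about why the rates differ.

```python
def break_even_premium(annual_claim_probability, average_payout, months=12):
    """Monthly premium at which expected payouts equal premiums collected."""
    return annual_claim_probability * average_payout / months


# Hypothetical inputs, chosen only to illustrate the mechanics.
groups = {
    "men": {"annual_claim_probability": 0.004, "average_payout": 3000.0},
    "women": {"annual_claim_probability": 0.001, "average_payout": 3000.0},
}

premiums = {name: break_even_premium(**params) for name, params in groups.items()}

for name, premium in premiums.items():
    print(f"{name}: ${premium:.2f} per month")
```

Nothing in the function takes a causal story as an input. A moral argument for charging both groups the same amount would have to be layered on top of this calculation, not smuggled into it.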

If you were to claim that because there may be nothing inherent in maleness that causes assault and being assaulted, therefore men shouldn’t have to pay more, you are making a moral argument, not an empirical one. You are also committing the ought-is fallacy. Just because your beliefs tell you that some aspect of the world should be a certain way, or that it would be more moral for the world to be a certain way, does not mean the world actually is that way or that everyone must agree to order the world as if that were true.

This doesn’t prevent you from making a moral argument that we should ignore certain correlates in certain cases in the interest of fairness. It means only that you should not make an empirical argument about what is ultimately a question of values.

The ought-is fallacy came up literally whenever Weapons of Math Destruction talked about insurance, as well as when it talked about sentencing disparities. Here’s one example:

But as the questions continue, delving deeper into the person’s life, it’s easy to imagine how inmates from a privileged background would answer one way and those from tough inner-city streets another. Ask a criminal who grew up in comfortable suburbs about “the first time you were ever involved with the police,” and he might not have a single incident to report other than the one that brought him to prison. Young black males, by contrast, are likely to have been stopped by police dozens of times, even when they’ve done nothing wrong. A 2013 study by the New York Civil Liberties Union found that while black and Latino males between the ages of fourteen and twenty-four made up only 4.7 percent of the city’s population, they accounted for 40.6 percent of the stop-and-frisk checks by police. More than 90 percent of those stopped were innocent. Some of the others might have been drinking underage or carrying a joint. And unlike most rich kids, they got in trouble for it. So if early “involvement” with the police signals recidivism, poor people and racial minorities look far riskier.

Now I happen to agree with Dr. O’Neil that we should not allow race to end up playing a role in prison sentence length. There are plenty of good things to include in a sentence length: seriousness of crime, remorse, etc. I don’t think race should be one of these criteria and since the sequence of events that Dr. O’Neil mentions makes this far from the default in the criminal justice system, I think doing more to ensure race stays out of sentencing is an important moral responsibility we have as a society.

But Dr. O’Neil’s empirical criticism of recidivism models is entirely off base. In this specific example, she is claiming that some characteristics that correlate with recidivism should not be used in recidivism models even though they improve the accuracy, because they are not per se causative of crime.

Because of systematic racism and discrimination in policing[3], the recidivism rate among black Americans is higher. If the only thing you care about is maximizing the prison sentence of people who are most likely to re-offend, then your model will tag black people for longer sentences. It does not matter what the “cause” of this is! Your accuracy will still be higher if you take race into account.

To say “black Americans seem to have a higher rate of recidivism, therefore we should punish them more heavily” is almost to commit the opposite fallacy, the is-ought. Instead, we should say “yes, empirically there’s a high rate of recidivism among black Americans, but this is probably caused by social factors and regardless, if we don’t want to create a population of permanently incarcerated people, with all of the vicious cycle of discrimination that this creates, we should aim for racial parity in sentencing”. This is a very strong (and I think persuasive) moral claim[4].

It certainly is more work to make a complicated moral claim that mentions the trade-offs we must make between punishment and fairness (or between what is morally right and what is expedient) than it is to make a claim that makes no reference to these subtleties. When we admit that we are sacrificing accuracy in the name of fairness, we do open up an avenue for people to attack us.

Despite this disadvantage, I think keeping our moral and empirical claims separate is very important. When you make the empirical claim that “being black isn’t causative of higher rates of recidivism, therefore the models are wrong when they rank black Americans as more likely to reoffend”, instead of the corresponding ethical claim, then you are making two mistakes. First, there’s lots of room to quibble about what “causative” even means, beyond simple genetic causation. Because you took an empirical and not ethical position, you may have to fight any future evidence to the contrary of your empirical position, even if the evidence is true; in essence, you risk becoming an enemy of the truth. If the truth becomes particularly obvious (and contrary to your claims) you risk looking risible and any gains you achieved will be at risk of reversal.

Second, I would argue that it is ridiculous to claim that universal human rights must rest on claims of genetic identicalness between all groups of people (and trying to make the empirical claim above, rather than a moral claim, implicitly embraces this premise). Ashkenazi Jews are (on average) about 15 IQ points ahead of other groups. Should we give them any different moral worth because of this? I would argue no[5]. The only criterion for full moral worth as a human, and for all the universal rights that all humans are entitled to, is being human.

As genetic engineering becomes possible, it will be especially problematic to have a norm that moral worth of humans can be modified by their genetic predisposition to pro-social behaviour. Everyone, but most especially the left, which views diversity and flourishing as some of its most important projects, should push back against both the is-ought and ought-is fallacies and fight for an expansive definition of universal human rights.

Availability Bait-and-Switch

Imagine someone told you the following story:

The Fair Housing Act has been an absolute disaster for my family! My brother was trying to sublet his apartment to a friend for the summer. Unfortunately, one of the fair housing inspectors caught wind of this and forced him to put up notices that it was for rent. He had to spend a week showing random people around it and some snot-nosed five-year-old broke one of his vases while he was showing that kid's mother around. I know there were problems before, but is the Fair Housing Act really worth it if it can cause this?

Most people would say the answer to the above is “yes, it really was worth it, oh my God, what is wrong with you?”

But it’s actually hard to think that. Because you just read a long, vivid, easily imaginable example of what exactly was wrong with the current regime and a quick throw away reference to there being problems with the old way things were done. Some people might say that it’s better to at least mention that the other way of doing things had its problems too. I disagree strenuously.

When you make a throw-away reference to problems with another way of doing things, while focusing all of your descriptive effort on the problems of the current way (or vice-versa), you are committing the Availability Bait-and-Switch. And you are giving a very false illusion of balance; people will remember that you mentioned both had problems, but they will not take this away as their impression. You will have tricked your readers into thinking you gave a balanced treatment (or at least paved the way for a defence against claims that you didn’t give a balanced treatment) while doing nothing of the sort!

We are all running corrupted hardware. One of the most notable cognitive biases we have is the availability heuristic. We judge probabilities based on what we can easily recall, not on any empirical basis. If you were asked “are there more words in the average English language book that start with k, or have k as the third letter?”, you’d probably say “start with k!”[6]. In fact, words with “k” as the third letter show up more often. But these words are harder to recall and therefore much less available to your brain.
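If you would rather count than trust your recall, the check is easy to sketch. The snippet below tallies words that start with “k” against words with “k” as the third letter. The function name `count_k_positions` and the sample sentence are my own inventions for illustration, and the sentence is deliberately contrived, so it demonstrates the method and proves nothing about English; a real test would need the full text of an actual book.

```python
import re


def count_k_positions(text):
    """Return (words starting with 'k', words with 'k' as the third letter)."""
    words = re.findall(r"[a-z]+", text.lower())
    starts_with_k = sum(1 for word in words if word[0] == "k")
    k_in_third = sum(1 for word in words if len(word) >= 3 and word[2] == "k")
    return starts_with_k, k_in_third


# A contrived sample sentence, not a representative corpus.
sample = (
    "I like to bake a cake and make a joke, "
    "but the king kept asking to take the kite."
)

starts, third = count_k_positions(sample)
print(f"start with k: {starts}, k as third letter: {third}")
```

Words like “like”, “make” and “take” are everywhere in ordinary prose, but they do not come to mind when you go looking for “k” words, which is the whole problem.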

If I were to give you a bunch of very vivid examples of how algorithms can ruin your life (as Dr. O’Neil repeatedly does, most egregiously in chapters 1, 5, and 8) and then mention off-hand that human decision making also used to ruin a lot of people’s lives, you’d probably come out of our talk much more concerned with algorithms than with human decision making. This was a thing I had to deliberately fight against while reading Weapons of Math Destruction.

Because for a book about how algorithms are destroying everything, there was a remarkable paucity of data on this destruction. I cannot recall seeing any comparative analysis (backed up by statistics, not anecdotes) of the costs and benefits of human decision making and algorithmic decision making, as it applied to Dr. O’Neil’s areas of focus. The book was all the costs of one and a vague allusion to the potential costs of the other.

If you want to give your readers an accurate snapshot of the ground truth, your examples must be representative of the ground truth. If algorithms cause twice as much damage as human decision making in certain circumstances (and again, I’ve seen zero proof that this is the case) then you should interleave every two examples of algorithmic destruction with one of human pettiness. As long as you aren’t doing this, you are lying to your readers. If you’re committed to lying, perhaps for reasons of pithiness or flow, then drop the vague allusions to the costs of the other way of doing things. Make it clear you’re writing a hatchet job, instead of trying to claim epistemic virtue points for “telling both sides of the story”. At least doing things that way is honest[7].


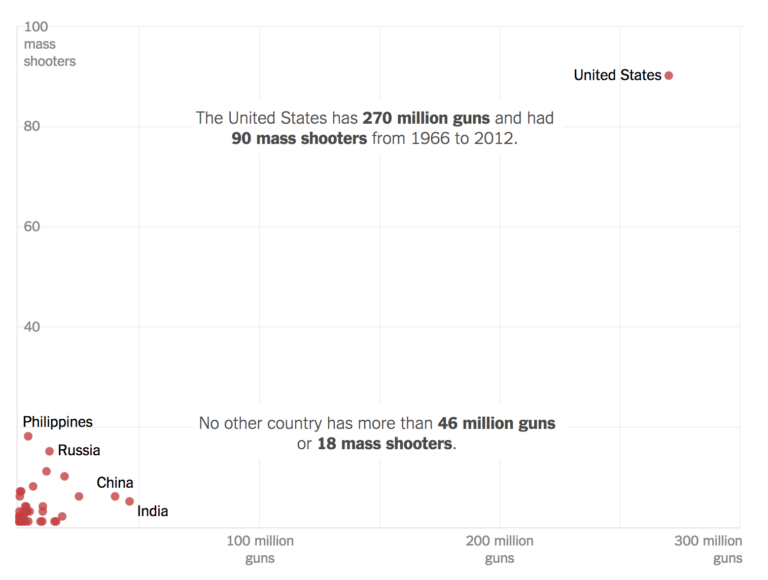

[1] This is a classic example of “anchoring”, a phenomenon where you appear to have a strong correlation in a certain direction because of a single extreme point. When you have anchoring, it’s unclear how generalizable your conclusion is – as the whole direction of the fit could be the result of the single extreme point.

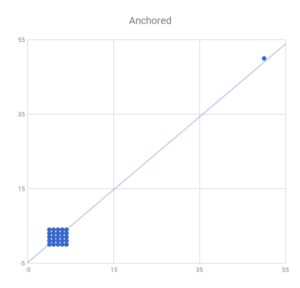

Here’s a toy example:

Note that the thing that makes me suspicious of anchoring here is that we have a big hole with no data and no way of knowing what sort of data goes there (it’s not likely we can randomly generate a bunch of new countries and plot their gun ownership and rate of mass shootings). If we did some more readings (ignoring the fact that in this case we can’t) and got something like this:

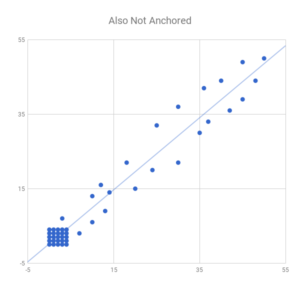

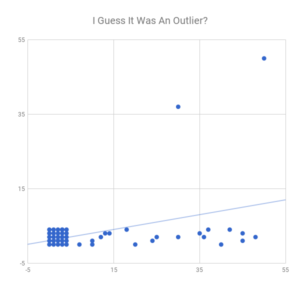

I would no longer be worried about anchoring. It really isn’t enough just to look at the correlation coefficient either. The image labelled “Also Not Anchored” has a marginally lower correlation coefficient than the anchored image, even though (I would argue) it is FAR more likely to represent a true positive correlation. Note also we have no way to tell that more data will necessarily give us a graph like the third. We could also get something like this:

In which we have a fairly clear trend of noisy data with an average of 2.5 irrespective of our x-value and a pair of outliers driving a slight positive correlation.
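The anchoring effect described in this note is easy to reproduce numerically. Here is a minimal sketch using made-up data (not the data behind any of the graphs above): a small cluster with essentially no trend, plus one extreme point. The `pearson` helper is a plain implementation of the standard Pearson correlation coefficient, written out so the example has no dependencies.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up cluster with almost no relationship between x and y.
cluster_x = [1, 2, 3, 4, 5, 6]
cluster_y = [2, 3, 3, 2, 2, 3]

# The same cluster plus a single extreme point far from everything else.
anchored_x = cluster_x + [20]
anchored_y = cluster_y + [10]

r_cluster = pearson(cluster_x, cluster_y)
r_anchored = pearson(anchored_x, anchored_y)

print(f"cluster only:       r = {r_cluster:.2f}")
print(f"with extreme point: r = {r_anchored:.2f}")
```

One added point takes the coefficient from close to zero to above 0.9, which is why the coefficient alone can’t tell you whether a trend is real or anchored.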

Also, the NYT graph isn’t normalized to population, which is kind of a WTF level mistake. They include another graph that is normalized later on, but the graph I show is the preview image on Facebook. I was very annoyed with the smug liberals in the comments of the NYT article, crowing about how conservatives are too stupid to understand statistics. But that’s a rant for another day…

[2] I’d very quickly go out of business because of the moral hazard and adverse selection built into this product, but that isn’t germane to the example.


[3] Or at least, this is my guess as to the most plausible factors in the recidivism rate discrepancy. I think social factors – especially when social gaps are so clear and pervasive – seem much more likely than biological ones. The simplest example of the disparity in policing – and its effects – is the relative rates of being stopped by police during Stop and Frisk given above by Dr. O’Neil.


[4] It’s possible that variations in monoamine oxidase A or some other gene amongst populations might make some populations more predisposed (in a biological sense) to violence or other antisocial behaviour. Given that violence and antisocial behaviour are relatively uncommon (e.g. about six in every one thousand Canadian adults are incarcerated or under community supervision on any given day), any genetic effect that increases them would both be small on a social level and lead to a relatively large skew in terms of supervised populations.

This would occur in the same way that repeat offenders tend to be about one standard deviation below median societal IQ but the correlation between IQ and crime explains very little of the variation in crime. This effect exists because crime is so rare.

It is unfortunately easy for people to take things like “Group X is 5% more likely to be violent”, and believe that people in Group X are something like 5% likely to assault them. This obviously isn’t true. Given that there are about 7.5 assaults for every 1000 Canadians each year, a population that was instead 100% Group X (with their presumed 5% higher assault rate) would see about 7.875 assaults per 1000 people, a difference of about one additional assault per 2,700 people.
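Working through that arithmetic with only the figures quoted in the paragraph above (a baseline of 7.5 assaults per 1000 people and a presumed 5% relative increase), the extra risk is 0.375 assaults per 1000 people, or roughly one additional assault per 2,700 people. The variable names below are mine.

```python
baseline_per_1000 = 7.5    # assaults per 1000 people per year, as quoted above
relative_increase = 0.05   # the presumed "5% more likely" for Group X

# A relative increase scales the baseline rate; it is not added to it.
group_x_per_1000 = baseline_per_1000 * (1 + relative_increase)
extra_per_1000 = group_x_per_1000 - baseline_per_1000
people_per_extra_assault = 1000 / extra_per_1000

print(f"Group X rate: {group_x_per_1000:.3f} per 1000")
print(f"Extra assaults: {extra_per_1000:.3f} per 1000")
print(f"One extra assault per {people_per_extra_assault:.0f} people")
```

The mistake people make is reading “5% more likely” as an absolute 5% chance, which would be 50 assaults per 1000 people rather than 7.875.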

Unfortunately, if society took its normal course, we could expect to see Group X very overrepresented in prison. As soon as Group X gets a reputation for violence, juries would be more likely to convict, bail would be less likely, sentences might be longer (out of fear of recidivism), etc. Because many jobs (and in America, social benefits and rights) are withdrawn after you’ve been sentenced to jail, formerly incarcerated members of Group X would see fewer legal avenues to make a living. This could become even worse if even non-criminal members of Group X were denied some jobs due to fear of future criminality, leaving Group X members with few overall options but the black and grey economies and further tightening the spiral of incarceration and discrimination.

In this case, I think the moral thing to do as a society is to ignore any evidence we have about between-group differences in genetic propensities to violence. Ignoring results isn’t the same thing as pretending they are false or banning research; we aren’t fighting against truth, simply saying that some small extra predictive power into violence is not worth the social cost that Group X would face in a society that is entirely unable to productively reason about statistics.

[5] Although we should be ever vigilant against people who seek to do the opposite and use genetic differences between Ashkenazi Jews and other populations as a basis for their Nazi ideology. As Hannah Arendt said, the Holocaust was a crime against humanity perpetrated on the body of the Jewish people. It was a crime against humanity (rather than “merely” a crime against Jews) because Jews are human.


[6] Or at least, you would if I hadn’t warned you that I was about to talk about biases.


[7] My next blog post is going to be devoted to what I did like about the book, because I don’t want to commit the mistakes I’ve just railed against (and because I think there was some good stuff in the book that bears reviewing).